What are the deepest customer value progressions and most sophisticated applications in today’s AI Voice journeys? This is the third of three articles exploring customer benefits, and is an extract from CPaaSAA’s recent report, “AI Voice: Who Will Run The Conversation?”. Part 1 gave an overview of the business benefits demonstrated in members’ case studies, and showed the main types of applications in use and the main pattern of customer progression. Part 2 looked at where, how and why customers start their journeys, with transcription and concierge-type services. You can download the full report here.

This article outlines some of the most advanced application types for AI Voice, illustrated with case studies.

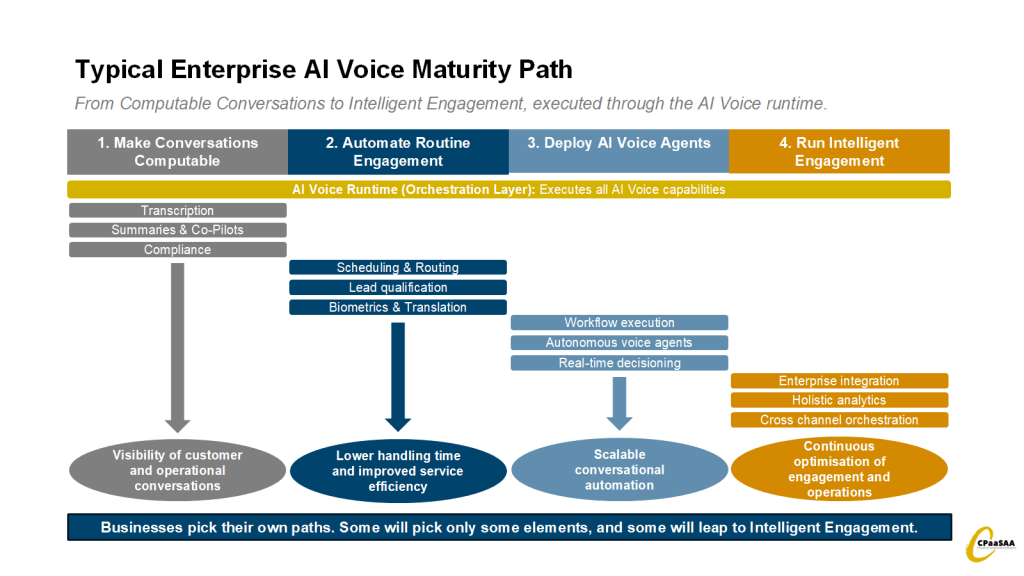

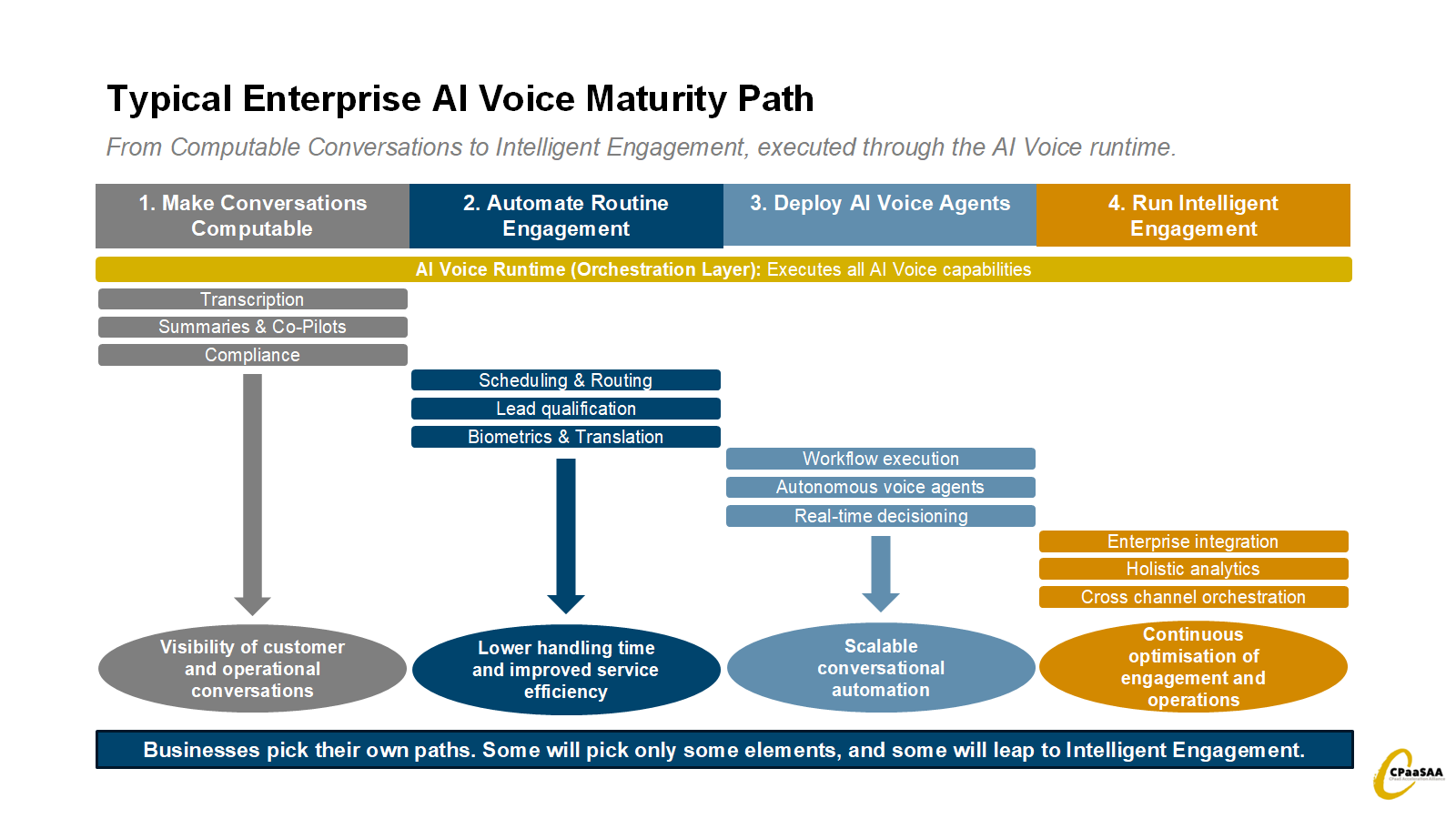

Adoption pattern: Most enterprises move from transcription and analytics toward automation and eventually autonomous AI Voice agents.

Most enterprises do not adopt AI Voice as a single large transformation. Instead, adoption typically follows a staged path as organisations first make conversations computable, then automate routine interactions, before eventually deploying autonomous AI agents and integrated engagement systems.

Each stage increases both the value of conversational data and the strategic importance of the runtime that orchestrates it.

Translation

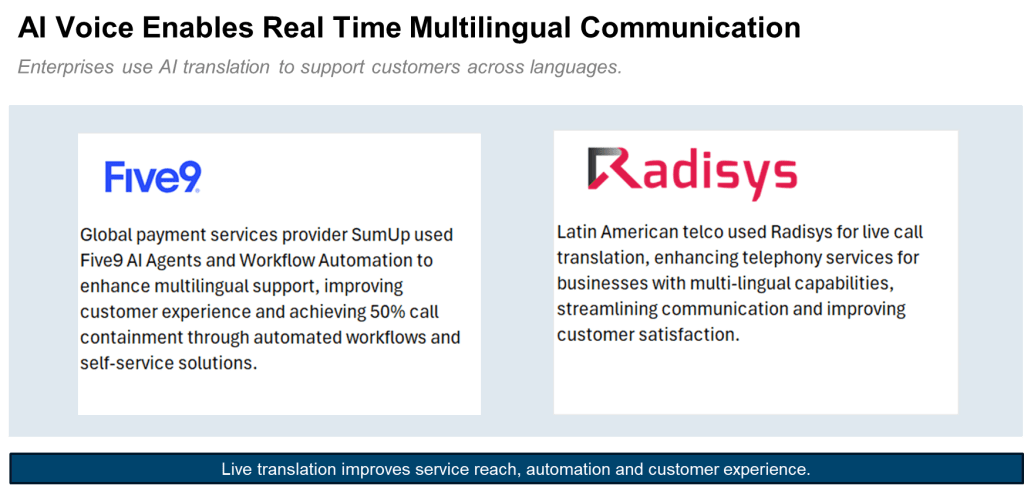

Once transcription is in place (and sometimes even as the first application), call translation is a natural progression for AI Voice, given the rapid improvement in the translation capabilities of many LLMs. (See also the Telco Network AI Voice section.)

Source: CPaaSAA Case Directory

NB. Call Containment = automated call completion without escalation.

The emergence of real-time voice translation in both enterprise platforms and telecom networks indicates a broader shift toward “AI in every call”, where AI capabilities are invoked dynamically during conversations rather than embedded only in contact centre applications.

Biometrics and Sentiment Analysis

AI models can identify speakers and analyse the emotional tone of their voices. The following case study is an example of biometrics used for identity verification.

Source: CPaaSAA Case Directory

“The real shift is from scripted responses to systems that can interpret intent, maintain context, and handle the variability of real human speech, including accent, emotion, and ambiguity.”

Melvin Maahen, Senior Product Manager, 8x8

Analytics

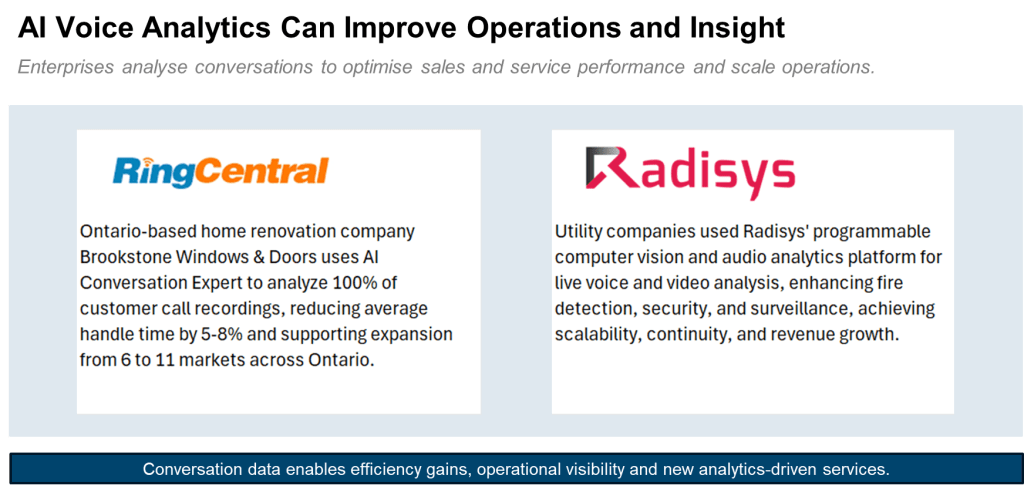

Adding analytics and intelligence to voice with AI is a natural step. The following two case study summaries show analytics applied to specific calls and engagements. The benefits include improved quality, scalability and growth, and reduced handling times.

Source: CPaaSAA Case Directory

Many platform vendors are now positioning conversation intelligence as a core capability of communications systems rather than a separate analytics layer. Demonstrations at Enterprise Connect 2026 showed analytics engines monitoring 100% of customer interactions in real time and generating insights during live calls.

Holistic, real-time analysis and action

The next level of sophistication comes when the conversational data in AI Voice is combined with other information to generate holistic insights and trigger actions in real time.

Further examples of analytics and automation, and their benefits, appear in the following section on vCons.

vCons: Context, Compliance and Competitive Advantage

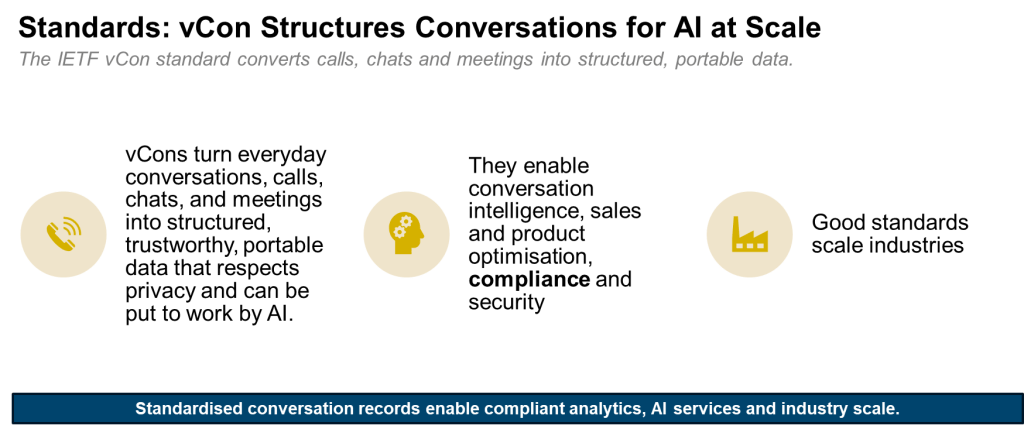

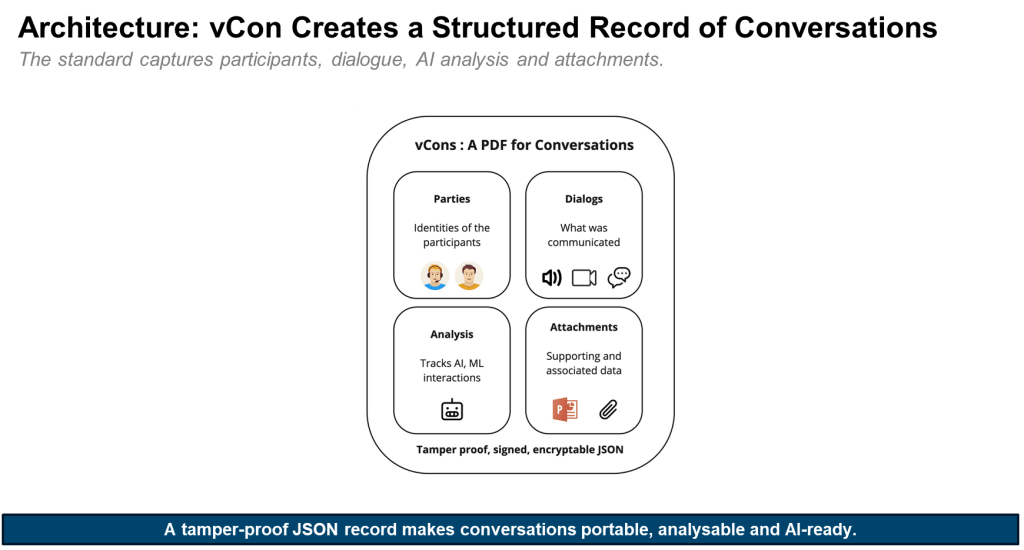

vCon (short for “virtual conversation”) is a new IETF (Internet Engineering Task Force) standard for conversation data. It was pioneered by Thomas McCarthy-Howe, CTO of vConic and a CPaaSAA member, among others, as a way to store conversation data so that both humans and AI can access it intelligently and compliantly.

vCons present a significant new opportunity to further develop data and AI Voice services by turning everyday conversations, calls, chats, and meetings into structured, trustworthy, portable data that respects privacy and can be put to work by AI.

In Thomas’s words, “vCons turn messy, unstructured conversations into clean, structured data. They surface the dark operational data that’s hidden in everyday customer interactions and convert it into high‑quality ‘robot food’ for AI, so you can finally use those conversations to run the business better.”

The vCon standard offers Intelligent Engagement players two fundamental opportunities. It:

- Standardises the design and management of conversation data processes, saving practitioners from having to solve this core challenge themselves

- Makes it possible to ensure compliance with global and national legislation such as GDPR, despite the complex interconnection of data points involved in holistic analysis

Source: CPaaSAA Research

“A vCon is the container for data and information relating to a real-time, human conversation. It is analogous to a [vCard] which enables the definition, interchange and storage of an individual’s various points of contact. The data contained in a vCon may be derived from any multimedia session, traditional phone call, video conference, SMS or MMS message exchange, webchat or email thread.”

Source: IETF, The vCon – Conversation Data Container – Overview.

Source: CPaaSAA research, Conserver.io
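To make the container idea more concrete, here is a minimal Python sketch of a vCon-style record for a single phone call. It assumes the top-level field names used in the IETF vCon draft at the time of writing (vcon, uuid, created_at, parties, dialog, analysis, attachments); these may change between draft revisions, and the version string, phone numbers, role labels and vendor name are purely illustrative. It is not a conformant implementation.

```python
import json
import uuid
from datetime import datetime, timezone


def make_vcon(caller: str, agent: str, transcript: str, summary: str) -> dict:
    """Build a minimal vCon-style JSON object for a two-party call.

    Field names approximate the IETF vCon draft; treat this as an
    illustration of the container concept, not a validated vCon.
    """
    now = datetime.now(timezone.utc).isoformat()
    return {
        "vcon": "0.0.1",                # version string (assumed value)
        "uuid": str(uuid.uuid4()),      # unique ID for this conversation
        "created_at": now,
        "parties": [                    # participants, referenced by index
            {"tel": caller, "role": "customer"},
            {"tel": agent, "role": "agent"},
        ],
        "dialog": [                     # the conversation content itself
            {
                "type": "text",
                "start": now,
                "parties": [0, 1],      # indices into "parties"
                "mimetype": "text/plain",
                "body": transcript,
            }
        ],
        "analysis": [                   # AI-derived output about the dialog
            {
                "type": "summary",
                "dialog": 0,            # index into "dialog"
                "vendor": "example-ai", # hypothetical vendor name
                "body": summary,
            }
        ],
        "attachments": [],              # e.g. related CRM records or documents
    }


record = make_vcon(
    "+441234567890",
    "+441234000000",
    "Hi, my order hasn't arrived yet...",
    "Customer asked about a delayed order.",
)
print(json.dumps(record, indent=2))
```

Because the dialog and analysis entries refer to parties and dialog items by index, AI-derived outputs such as summaries stay linked to the exact part of the conversation they were derived from, which is what makes the record useful to both humans and AI.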

Using vCons alongside SCITT (Supply Chain Integrity, Transparency, and Trust), a related IETF framework, enables an organisation to store customer conversation data from all types of media, check it, analyse it, act on it and, if required, remove it in a GDPR-compliant manner.

The following vCon case studies demonstrate the additional insight and value unlocked by analysing information across all elements of the conversation, typically surfacing issues and actions that are invisible to other systems such as traditional CRM.

Source: CPaaSAA Case Directory

While many steps on the AI Voice journey produce incremental and sometimes transformative results, the move to such holistic data management, analysis and action (whether automated or manual) is an evolutionary leap.

For some customers, that leap can and must be made immediately; for others (typically those under less market pressure and with more embedded legacy investments), it is a journey that must be taken step by step.

Our next series of extracts will explore current customer adoption and market forecasts for AI Voice.

Meanwhile, you can download the full AI Voice report here.

Andrew Collinson

Andrew Collinson is a telecoms and connected technologies expert, specializing in growth strategy, research, and thought leadership. As founder of Connective Insights, he helps clients translate new technologies into viable business models, with a focus on CPaaS, APIs, platform strategies, AI, and network automation.

Before joining CPaaSAA as Associate Research Partner, Andrew was Research and Commercial Director at STL Partners for 15 years, leading a successful research business. He also moderates events, conducts bespoke research, and advises on telecom innovation, stakeholder dynamics, and digital transformation.
